Operationalizing Expert Preferences for Model and Agent Evals

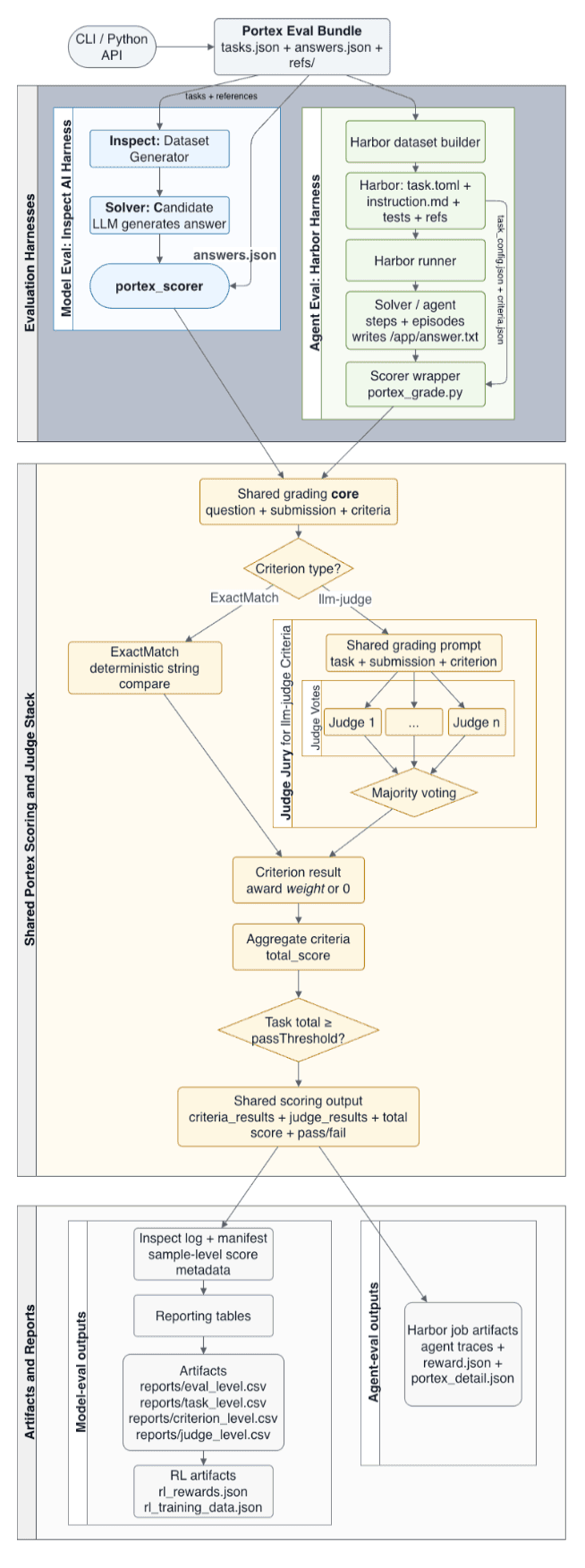

We introduce AsymmetryZero, a framework that formalizes expert requirements as semantic eval contracts for both model and agent runs. Using Harbor benchmark experiments, we show why cheaper compact judge juries are promising for outcome monitoring but still weaker when criterion-level auditability matters.

Evaluating Agents on Portex with Harbor

This post introduces Portex’s Harbor integration, which enables agentic evals in realistic sandbox environments with configurable tasks, tools, and grading. It shows how users can test and audit agents on longer-horizon workflows that better reflect real-world use.

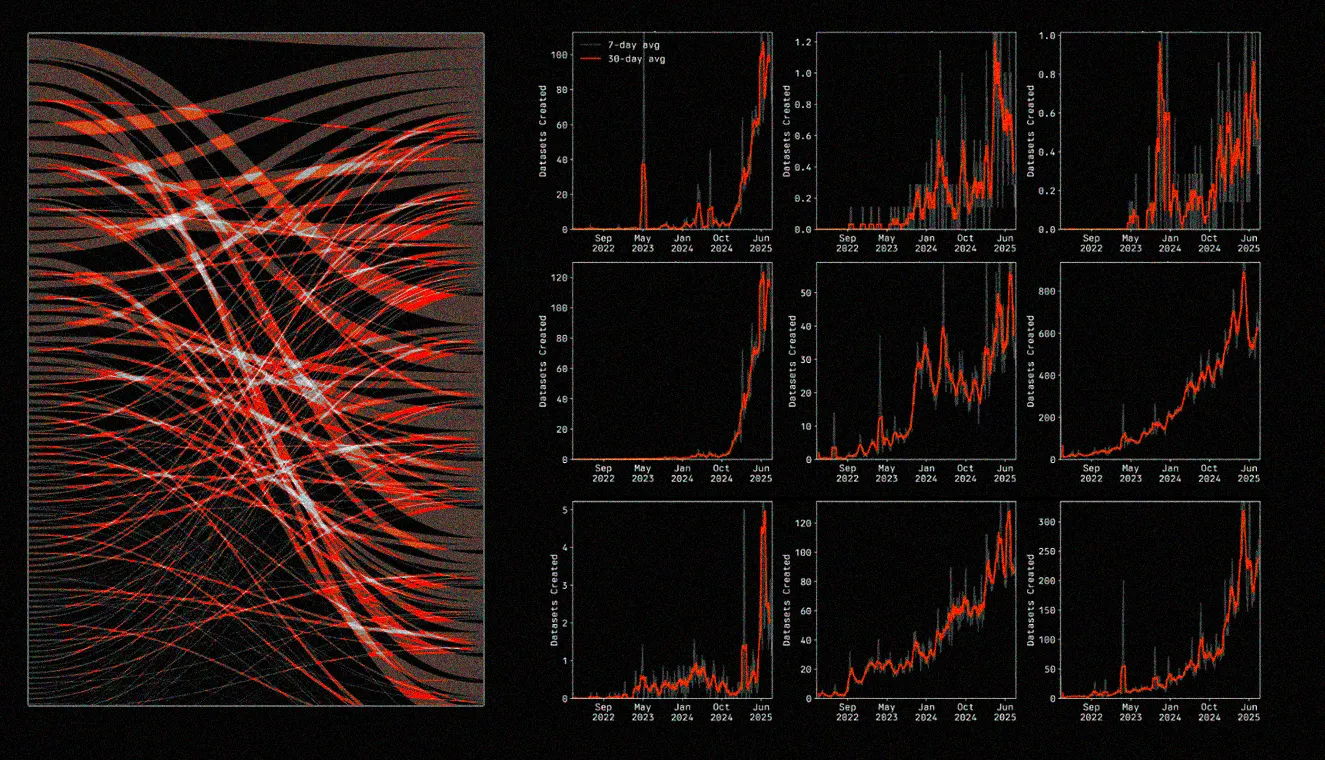

Data Valuation as a Foundation for AI Progress

As AI labs race for scarce, high-value data, old brokerage models are starting to crack. This post makes the case for transparent data valuation and market design as the infrastructure needed to unlock the next phase of AI progress.

What Hugging Face Reveals About the Future of Model Fine-Tuning

Hugging Face’s growth shows that AI’s bottleneck is no longer access to capable base models, but access to the right data for fine-tuning them. The post explores why specialized, rights-cleared datasets are becoming the key differentiator in a world of cheap, abundant open weights.

Dataset Highlight: Large Scale Sybil Detection

Sybil attacks quietly distort airdrops, governance, and user analytics across crypto applications. This post looks into why they persist, where current defenses fall short, and how scalable onchain detection can help restore fairer networks.

Applying Transfer Learning to Bytecode

A walkthrough of a ML pipeline that starts with smart contract bytecode, decompiles it into human-readable code, and uses LongCoder embeddings plus a feed-forward neural network to classify contracts as reputable or malicious. The post makes the case that transfer learning can surface meaningful behavioral patterns directly from decompiled code.